At IFA in Berlin, Europe’s biggest consumer electronics show, there is no doubting that the vinyl revival is real.

At times it did feel like going back in time. On the Teac stand there were posters for Led Zeppelin and The Who, records by Deep Purple and the Velvet Underground, and of course lots of turntables.

Why all the interest in vinyl? Nostalgia is a factor but there is a little more to it. A record satisfies a psychological urge to collect, to own, to hold a piece of music that you admire, and streaming or downloading does not meet that need.

There is also the sound. At their best, records have an organic realism that digital audio rarely matches. Sometimes that is because of the freedom digital audio gives mastering engineers to crush all the dynamics out of music in a quest to make everything as LOUD as possible. Other factors are the possibility of euphonic distortion in vinyl playback, or that excessive digital processing damages the purity of the sound. Records also have plenty of drawbacks, including vulnerability to physical damage, dust which collects on the needle, geometric issues which mean that the arm is (most of the time) not exactly parallel to the groove, and the fact that the quality of reproduction drops near the centre of the record, where the groove passes under the stylus more slowly.
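To put a rough number on that last point, here is a minimal sketch of how the groove speed under the stylus falls as the record plays. The radii are my own approximate assumptions for a 12” LP (about 146mm at the outer groove and 60mm at the innermost), not figures from any manufacturer.

```python
import math

RPM_LP = 100.0 / 3.0  # 33 1/3 revolutions per minute


def groove_speed_mm_per_s(radius_mm, rpm=RPM_LP):
    """Linear speed of the groove under the stylus at a given radius."""
    # One revolution covers a circumference of 2 * pi * r, and the record
    # makes rpm / 60 revolutions every second.
    return 2.0 * math.pi * radius_mm * rpm / 60.0


# Assumed, approximate groove radii for a 12-inch LP (illustrative only).
OUTER_RADIUS_MM = 146.0
INNER_RADIUS_MM = 60.0

outer = groove_speed_mm_per_s(OUTER_RADIUS_MM)
inner = groove_speed_mm_per_s(INNER_RADIUS_MM)
print(f"outer groove: {outer:.0f} mm/s")
print(f"inner groove: {inner:.0f} mm/s")
print(f"ratio: {outer / inner:.2f}")
```

With these assumed radii the groove moves less than half as fast at the end of a side as at the start, so the same musical detail has to be squeezed into a much shorter length of groove.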

Somehow all these annoyances have not prevented vinyl sales from increasing, and audio companies are taking advantage. It is a gift for them, some slight relief from the trend towards smartphones, streaming, earbuds and wireless speakers in place of traditional hi-fi systems.

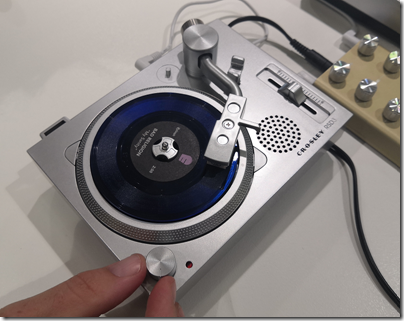

One of the craziest things I saw at IFA was Crosley’s RDS3, a miniature turntable too small even for a 7” single. It plays one-sided 3” records, of which there are hardly any available to buy. Luckily it is not very expensive, and is typically sold on Record Store Day complete with a collectible 3” record which you can play again and again.

Moving from the ridiculous to the sublime, I was also intrigued by Yamaha’s GT-5000. It is a high-end turntable which is not yet in full production. I was told there are only three in existence at the moment, one on the stand at IFA, one in a listening room at IFA, and one at Yamaha’s head office in Japan.

Before you ask, price will be around €7000, complete with arm. A lot, but in the world of high-end audio, not completely unaffordable.

There was a Yamaha GT-2000 turntable back in the eighties, the GT standing for “Gigantic and Tremendous”. Yamaha told me that engineers in retirement were consulted on this revived design.

The GT-5000 is part of a recently introduced 5000 series, including amplifier and loudspeakers, which takes a 100% analogue approach. The turntable is belt drive, and features a very heavy two-piece platter. The brass inner platter weighs 2kg and the aluminium outer platter 5.2kg. The high mass of the platter stabilises the rotation. The straight tonearm features a copper-plated aluminium inner tube and a carbon outer tube. The headshell is cut aluminium and is replaceable. You can adjust the speed ±1.5% in 0.1% increments. Output is via XLR balanced terminals or unbalanced RCA. Yamaha does not supply a cartridge but recommends the Ortofon Cadenza Black.

Partnering the GT-5000 are the C-5000 pre-amplifier, the M-5000 100W per channel stereo power amplifier, and the NS-5000 three-way loudspeakers. Both amplifiers have balanced connections and Yamaha has implemented what it calls “floating and balanced technology”:

Floating and balanced power amplifier technology delivers fully balanced amplification, with all amplifier circuitry including the power supply ‘floating’ from the electrical ground … one of the main goals of C-5000 development was to have completely balanced transmission of phono equaliser output, including the MC (moving coil) head amp … balanced transmission is well-known to be less susceptible to external noise, and these qualities are especially dramatic for minute signals between the phono cartridge and pre-amplifier.

In practice I suspect many buyers will partner the GT-5000 with their own choice of amplifier, but I do like the pure analogue approach which Yamaha has adopted. If you are going to pretend that digital audio does not exist you might as well do so consistently (I use Naim amplifiers from the eighties with my own turntable setup).

I did get a brief chance to hear the GT-5000 in the listening room at IFA. I was not familiar with the recording and cannot make meaningful comment except to say that yes, it sounded good, though perhaps slightly bright. I would need longer and to play some of my own familiar records to form a considered opinion.

What I do know is that if you want to play records, it really is worth investing in a high quality turntable, arm and cartridge. The pre-amplifier is critically important as well, because of the low output of cartridges, especially moving coil ones.

GT-5000 arm geometry

There is one controversial aspect to the GT-5000, which is its arm geometry. All tonearms are a compromise. The ideal tonearm has zero friction, perfect rigidity, and parallel tracking at all points, which is unfortunately impossible to achieve. The GT-5000 has a short, straight arm, whereas most arms have an angled headshell and slightly overhang the centre of the platter. The problem with a short, straight arm is that it has a higher deviation from parallel than a longer arm with an angled headshell, so much so that it may only be suitable for a conical stylus. On the other hand, it does not require any bias adjustment, simplifying the design. With a straight arm, it would be geometrically preferable to have a very long arm, but that may tend to resonate more as well as requiring a large plinth. I am inclined to give the GT-5000 the benefit of the doubt; it will be interesting to see detailed listening and performance tests in due course.
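For anyone who wants to see the size of the effect, the deviation from parallel follows from the triangle formed by the arm pivot, the spindle and the stylus. The sketch below is only that, a sketch: the arm dimensions are hypothetical numbers I picked for illustration, not Yamaha’s published figures, and I have assumed the straight arm is set up so that the stylus stops short of the spindle. The groove radii (60mm to 146mm) are approximate assumptions for a 12” LP.

```python
import math


def tracking_error_deg(radius_mm, effective_length_mm, pivot_to_spindle_mm,
                       offset_deg=0.0):
    """Angle in degrees between the cartridge axis and the groove tangent.

    Uses the law of cosines on the pivot / spindle / stylus triangle.
    The sign only indicates the direction of the deviation.
    """
    length = effective_length_mm
    sine = (length ** 2 + radius_mm ** 2 - pivot_to_spindle_mm ** 2) / (
        2.0 * length * radius_mm)
    return math.degrees(math.asin(sine)) - offset_deg


def worst_error_deg(effective_length_mm, pivot_to_spindle_mm, offset_deg=0.0,
                    inner_mm=60, outer_mm=146):
    """Largest absolute deviation over the assumed playing area, in 1mm steps."""
    return max(
        abs(tracking_error_deg(r, effective_length_mm, pivot_to_spindle_mm,
                               offset_deg))
        for r in range(inner_mm, outer_mm + 1))


# Hypothetical arms, for illustration only (all dimensions in mm).
# Straight arm: no offset angle, pivot further from the spindle than the arm
# is long, so the stylus falls short of the spindle.
STRAIGHT_ARM = dict(effective_length_mm=220.0, pivot_to_spindle_mm=235.0)
# Conventional arm: angled headshell, stylus overhangs the spindle slightly.
OFFSET_ARM = dict(effective_length_mm=230.0, pivot_to_spindle_mm=212.2,
                  offset_deg=23.0)

print(f"straight arm, worst deviation: {worst_error_deg(**STRAIGHT_ARM):.1f} deg")
print(f"offset arm, worst deviation:   {worst_error_deg(**OFFSET_ARM):.1f} deg")
```

With these made-up dimensions the straight arm is exactly parallel to the groove at only one radius and drifts well away from it at either end of the side, while the angled arm stays within a few degrees throughout. Real arms will differ, which is why measured tests matter more than a sketch like this.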

More information on the GT-5000 is here.